Anthropic is suing the Trump administration, asking federal courts to reverse the Pentagon’s decision designating the artificial intelligence company a “supply chain risk” over its refusal to allow unrestricted military use of its technology.

Anthropic filed two separate lawsuits Monday, one in California federal court and another in the federal appeals court in Washington, D.C., each challenging different aspects of the Pentagon’s actions against the company.

The Pentagon last week formally designated the San Francisco tech company a supply chain risk after an unusually public dispute over how its AI chatbot Claude could be used in warfare.

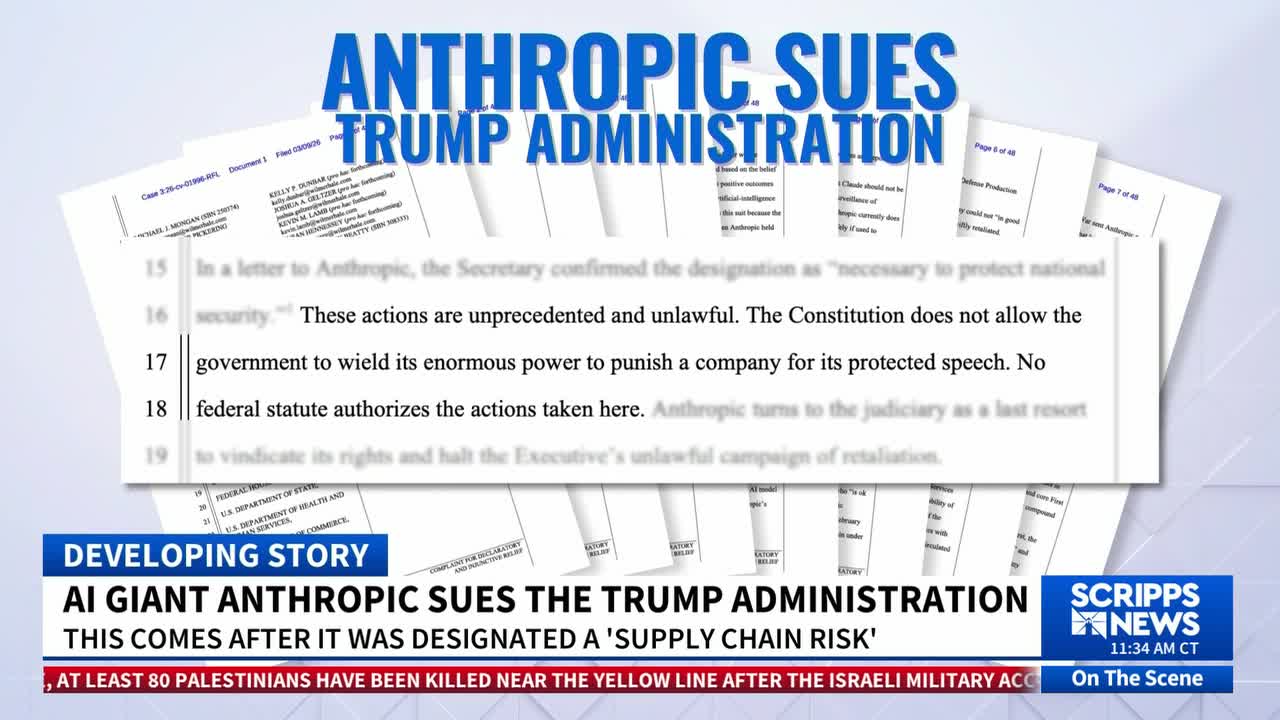

“These actions are unprecedented and unlawful,” Anthropic's lawsuit says. “The Constitution does not allow the government to wield its enormous power to punish a company for its protected speech. No federal statute authorizes the actions taken here. Anthropic turns to the judiciary as a last resort to vindicate its rights and halt the Executive’s unlawful campaign of retaliation.”

"Anthropic is alleging in its court documents that it is being retaliated against for its decisions related to its expression and association rights," Jennifer Huddleston, Senior Tech Policy Fellow at the Cato Institute told Scripps News in an interview. "There are also First Amendment implications, for the government either forcing [Anthropic] to design its product in a certain way, forcing it to carry to respond to certain content, as well as forcing it to potentially change is values."

The Defense Department declined to comment Monday, citing a policy of not commenting on matters in litigation.

Anthropic said it sought to bar two high-level uses of its technology: mass surveillance of Americans and fully autonomous weapons. Defense Secretary Pete Hegseth and other officials publicly insisted the company must accept “all lawful uses” of Claude and threatened punishment if Anthropic did not comply.

Designating the company a supply chain risk cuts off Anthropic's defense work using an authority that was designed to prevent foreign adversaries from harming national security systems. It was the first time the federal government is known to have used the designation against a U.S. company.

President Donald Trump also said he would order federal agencies to stop using Claude, though he gave the Pentagon six months to phase out a product that’s deeply embedded in classified military systems, including those used in the Iran war.

Anthropic's lawsuit also names other federal agencies, including the departments of Treasury and State, after officials ordered employees to stop using Anthropic’s services.

Even as it fights the Pentagon’s actions, Anthropic has sought to convince businesses and other government agencies that the Trump administration’s penalty is a narrow one that only affects military contractors when they are using Claude in work for the Department of Defense.

Making that distinction clear is crucial for the privately held Anthropic because most of its projected $14 billion in revenue this year comes from businesses and government agencies that are using Claude for computer coding and other tasks. More than 500 customers are paying Anthropic at least $1 million annually for Claude, according to a recent investment announcement that valued the company at $380 billion.

Anthropic said in a statement Monday that “seeking judicial review does not change our longstanding commitment to harnessing AI to protect our national security, but this is a necessary step to protect our business, our customers, and our partners.”

Until recently, Anthropic was the only one of its tech industry peers approved to supply its AI model to classified military systems. The dispute has led the Pentagon to look to shift Claude's work to Google's Gemini, OpenAI's ChatGPT and Elon Musk's Grok.

Conversely, the fight has boosted Anthropic's reputation among some customers and tech workers who sided with the company's refusal to bow to pressure from the Trump administration. Anthropic CEO Dario Amodei's moral stance stood out further when his bitter rival, OpenAI CEO Sam Altman, sought to replace the Pentagon's Claude with ChatGPT in a move Altman later admitted was rushed and seemed opportunistic.

Consumer downloads of Claude surged, lifting its popularity for the first time over better-known ChatGPT and Gemini.

How companies set guardrails also continues to have repercussions in the competition to retain AI industry talent. OpenAI's head of robotics, Caitlin Kalinowski, resigned over OpenAI's Pentagon deal.

"This wasn't an easy call, " Kalinowski wrote on social media over the weekend. "AI has an important role in national security. But surveillance of Americans without judicial oversight and lethal autonomy without human authorization are lines that deserved more deliberation than they got."

Another group of nearly 40 leading AI developers at OpenAI and Google, including Google's chief scientist and AI research division head Jeff Dean, filed a legal brief Monday supporting Anthropic.

"National security is not served by reckless designations of the military's American technology partners as a 'supply chain risk' or the suppression of public discourse on AI safety," said the filing from the workers who said they were acting in their personal capacities.

Huddleston says support like this indicates there's an awareness that this situation between Anthropic and the government is bigger than just one company.

"We're at a time where we're having a significant debate over what an AI policy framework should look like," Huddleston explained. "Typically we've seen the Trump administration take a very light touch approach to AI and AI innovation. This particular incident signals a pretty dramatic change from that approach."
